Navigating the Challenges and Ethical Considerations

Innovation is exciting. But in healthcare, excitement without guardrails can do real harm. So let’s talk about the practical realities behind implementing AI responsibly.

Algorithmic Bias and Health Equity

Algorithmic bias happens when an AI system produces unfair outcomes because it was trained on limited or skewed data. For example, a widely cited 2019 Science study found that a healthcare algorithm underestimated the needs of Black patients because it used healthcare spending as a proxy for illness severity (Obermeyer et al., 2019).

To reduce this risk:

- Audit training datasets for demographic diversity.

- Regularly test outputs across race, gender, and age groups.

- Involve multidisciplinary review panels before deployment.

Some argue AI is “objective.” In reality, it reflects the data we feed it (garbage in, garbage out). Pro tip: build bias testing into procurement requirements, not just deployment.
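The subgroup testing described above can be sketched in a few lines. This is a minimal illustration, not a validated fairness audit: the function names, the 0.05 gap threshold, and the hand-made records are all assumptions chosen for the example. It compares the false negative rate (missed cases divided by actual cases) across groups and flags a large gap.

```python
from collections import defaultdict

def false_negative_rate_by_group(records):
    """Return {group: FNR} where FNR = missed positives / actual positives.

    Each record is a (group, actual, predicted) tuple with boolean labels.
    Groups with no actual positives are omitted from the result.
    """
    positives = defaultdict(int)
    missed = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            positives[group] += 1
            if not predicted:
                missed[group] += 1
    return {g: missed[g] / positives[g] for g in positives}

def flag_disparity(rates, max_gap=0.05):
    """True if the gap between the best and worst group exceeds max_gap.

    The 0.05 default is an arbitrary illustrative threshold.
    """
    if not rates:
        return False
    return max(rates.values()) - min(rates.values()) > max_gap

# Hypothetical, hand-made records for illustration only (not real patient data).
records = [
    ("group_a", True, True), ("group_a", True, True),
    ("group_a", True, False), ("group_a", True, True),
    ("group_b", True, False), ("group_b", True, False),
    ("group_b", True, True), ("group_b", True, True),
    ("group_a", False, False), ("group_b", False, True),
]
rates = false_negative_rate_by_group(records)
print(rates)
print(flag_disparity(rates))
```

In practice the same comparison would be repeated for other metrics (false positives, calibration) and for each demographic attribute the review panel cares about.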

Data Privacy and Security

Patient data isn’t just sensitive; it’s sacred. For U.S. organizations, compliance with HIPAA (the federal law that sets standards for protecting health information) must be non-negotiable.

Action steps:

- Encrypt data in transit and at rest.

- Limit system access through role-based permissions.

- Conduct quarterly security audits.
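As a rough sketch of the role-based permissions step above, the example below denies access by default and records every attempt so that later audits have something to review. The roles, permission names, and record IDs are hypothetical; a real system would take them from the organization's own access policy and identity provider.

```python
from enum import Enum, auto

class Permission(Enum):
    READ_RECORD = auto()
    WRITE_RECORD = auto()
    RUN_MODEL = auto()

# Hypothetical role-to-permission mapping, for illustration only.
ROLE_PERMISSIONS = {
    "clinician": {Permission.READ_RECORD, Permission.WRITE_RECORD, Permission.RUN_MODEL},
    "data_scientist": {Permission.RUN_MODEL},
    "auditor": {Permission.READ_RECORD},
}

def is_allowed(role, permission):
    """Deny by default: unknown roles get no permissions."""
    return permission in ROLE_PERMISSIONS.get(role, set())

def access_record(role, record_id, audit_log):
    """Check permission, append an audit entry, and raise if denied."""
    allowed = is_allowed(role, Permission.READ_RECORD)
    audit_log.append((role, record_id, "granted" if allowed else "denied"))
    if not allowed:
        raise PermissionError(f"role {role!r} may not read record {record_id}")
    return f"record {record_id}"

audit_log = []
print(access_record("clinician", "r-001", audit_log))
try:
    access_record("data_scientist", "r-001", audit_log)
except PermissionError as exc:
    print(exc)
```

Logging denied attempts alongside granted ones is a deliberate choice here: the denied entries are often the most useful lines in a security review.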

Healthcare breaches cost an average of $10.93 million per incident (IBM, 2023). That’s not just expensive—it’s trust-shattering.

The ‘Black Box’ Problem

Some AI systems operate as “black boxes,” meaning even developers struggle to explain how conclusions are reached. In clinical care, that’s unsettling.

The solution? Explainable AI (XAI)—models designed to show reasoning pathways. For instance, instead of merely flagging a tumor, the system highlights image regions influencing the diagnosis. This transparency builds clinician confidence (and reduces that sci-fi “HAL 9000” vibe).
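One simple way to highlight influential image regions is occlusion sensitivity: mask one part of the image at a time and measure how much the model's score drops. The sketch below assumes a toy 4x4 "image" and a stand-in scoring function (`toy_score`, hypothetical) in place of a trained model, so it shows the idea rather than any particular product's method.

```python
def toy_score(image):
    """Stand-in for a trained model: mean brightness of the central 2x2 patch.

    This is a hypothetical scoring function, not a real diagnostic model.
    """
    return (image[1][1] + image[1][2] + image[2][1] + image[2][2]) / 4

def occlusion_map(image, score_fn, fill=0.0):
    """Mask one pixel at a time and record how much the score drops."""
    baseline = score_fn(image)
    heatmap = []
    for r, row in enumerate(image):
        heat_row = []
        for c, _ in enumerate(row):
            occluded = [list(x) for x in image]  # copy so the input is untouched
            occluded[r][c] = fill
            heat_row.append(baseline - score_fn(occluded))
        heatmap.append(heat_row)
    return heatmap

# Toy image: a bright 2x2 region in the center of a dim background.
image = [
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.8, 0.8, 0.1],
    [0.1, 0.8, 0.8, 0.1],
    [0.1, 0.1, 0.1, 0.1],
]
heatmap = occlusion_map(image, toy_score)
for row in heatmap:
    print([round(v, 2) for v in row])
```

Cells with larger values mark pixels the score depends on most. With a real imaging model, the mask would typically cover patches rather than single pixels, and the heatmap would be overlaid on the scan for the clinician.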

The Human Element

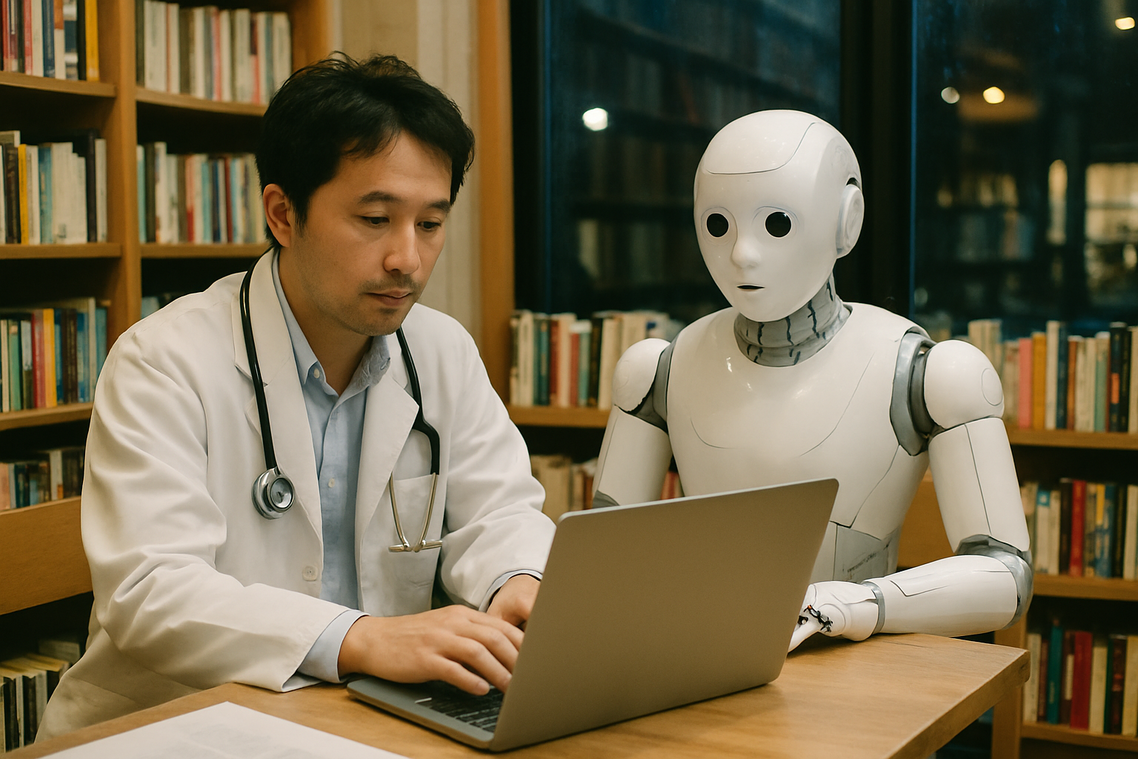

Finally, AI is a tool, not a replacement for clinical judgment. While AI in healthcare diagnostics can accelerate analysis, empathy and context remain human strengths.

Encourage adoption by:

- Offering hands-on training sessions.

- Positioning AI as decision support, not decision maker.

- Gathering clinician feedback early.

Because at the end of the day, technology should enhance care—not overshadow the people delivering it.

Augmenting Human Expertise for a Healthier Future

You came here to understand the what, how, and why behind implementing AI in healthcare diagnostics, from the technology powering it to the real-world impact and challenges that come with adoption. Now you have a clearer picture of how it works and why it matters.

The advantage is real. Used well, AI can improve diagnostic accuracy, speed, and efficiency, helping clinicians detect conditions earlier and deliver better patient outcomes. In a system where delays and misdiagnoses can cost lives, that edge matters.

But the future isn’t about replacing doctors. It’s about empowering them. When AI supports clinical expertise, the entire diagnostic process becomes smarter, faster, and more precise.

Healthcare is evolving quickly. Stay informed on the latest innovations shaping modern medicine so you’re never left behind. Follow emerging advancements, explore new tools, and remain proactive—because the future of better care starts with informed decisions today.

Ask Zyvaris Vasslor how they got into holistic wellness strategies and you'll probably get a longer answer than you expected. The short version: Zyvaris started doing it, got genuinely hooked, and at some point realized they had accumulated enough hard-won knowledge that it would be a waste not to share it. So they started writing.

What makes Zyvaris worth reading is that they skip the obvious stuff. Nobody needs another surface-level take on Holistic Wellness Strategies, Daily Workout Efficiency Hacks, or ZayePro Mobility Optimization. What readers actually want is the nuance: the part that only becomes clear after you've made a few mistakes and figured out why. That's the territory Zyvaris operates in. The writing is direct, occasionally blunt, and always built around what's actually true rather than what sounds good in an article. They have little patience for filler, which means their pieces tend to be denser with real information than the average post on the same subject.

Zyvaris doesn't write to impress anyone. They write because they have things to say that they genuinely think people should hear. That motivation, basic as it sounds, produces something noticeably different from content written for clicks or word count. Readers pick up on it. The comments on Zyvaris's work tend to reflect that.
